Alert! The Word That Can Turn Your Voice into the Key to Artificial Intelligence Fraud

With the rise of advanced artificial intelligence, phone scams have entered a new and alarming era. It’s no longer enough to simply ignore suspicious texts or strange emails; today, even your voice can be used against you. A few words spoken during a short call may give criminals enough material to clone your voice and commit fraud in your name without your knowledge.

Your Voice: A New Target for Cybercriminals

Your voice is more than just a personal trait — it’s now a valuable digital asset. AI technology can mimic your tone, accent, and even emotions with stunning accuracy. Cybercriminals record and replicate voices to carry out crimes such as identity theft, fake loan or bank approvals, and forged agreements. In this new world of voice cloning, a simple sentence can open the door to massive risks.

“Hackers now use advanced software to capture and clone real voices for fraud.”

The Danger of Saying “Yes”

One of the biggest dangers lies in one small word: “yes.” Scammers use recordings of your affirmative responses to authorize fake transactions or legal approvals, a tactic known as “yes fraud.” Once they capture your voice saying “yes,” they can reuse it in recorded confirmations or use AI to try to fool voice authentication systems.

“Just one word like ‘yes’ can be recorded and reused by AI-driven fraudsters.”

What to do instead:

Avoid direct affirmatives like “yes” or “yeah.”

Use neutral responses or ask identifying questions such as:

“What’s the purpose of your call?”

“Who am I speaking with?”

“AI can now mimic your voice so precisely that it’s hard to tell real from fake.”

Even Simple Greetings Can Be Risky

It’s not just the word “yes” that can cause harm. Even everyday greetings like “hello” or “hey” can help scammers. Automated systems use those recordings to confirm that your number is active and that your voice matches a real person. Just answering a suspicious call may give criminals a verified voice sample for future scams.

Safer approach:

Let unknown callers speak first before responding.

Use cautious phrases like:

“Who are you trying to reach?”

“How can I assist you?”

“Even saying a simple ‘hello’ to an unknown caller can confirm that your number is active.”

How AI Makes Voice Cloning Possible

AI technology has made voice cloning incredibly easy. With just a few seconds of recorded audio, artificial intelligence can recreate your tone, rhythm, and speech pattern to sound nearly identical to you. Once that happens, scammers can impersonate you in countless ways, including:

Calling your friends or relatives to urgently request money.

Accessing bank accounts that use voice authentication.

Approving fake contracts or legal documents.

How to Protect Yourself

While technology continues to evolve, your best defense is awareness and caution. Follow these safety steps to protect your voice from AI-driven fraud:

Always verify a caller’s identity before sharing personal information.

Avoid participating in voice-based surveys or automated recordings.

Monitor your bank accounts for suspicious activity.

Block and report strange or persistent numbers.

Never share passwords, ID numbers, or banking details over the phone.

If a call feels suspicious or pressured — hang up immediately.

“Awareness and caution are your best defense against AI-powered voice scams.”

Final Thoughts

We live in an age where technology evolves faster than our ability to keep up. Your voice — once a simple form of communication — has become a vulnerable digital signature. Staying safe now means staying skeptical. Think carefully before speaking, especially with unknown callers.

Sometimes, the smartest thing you can say is nothing at all.

“Sometimes silence protects more than words ever could.”

6 habits that make older women look beautiful

The idea of beauty is one of those rare things in life that becomes more intriguing as time goes by. When we are young, beauty is a purely biological thing, something that happens because of our genetic makeup and our youthful, smooth skin. But as we age, so does our understanding of beauty. Beauty does not disappear; it changes, becoming more complex and profound. It evolves from an aesthetic quality into a deeper notion.

Many women grow more elegant with age. They develop an aura of quiet confidence, poise, and charisma that is unique to them and impossible to buy or copy. Their beauty is not the result of trendy, costly procedures and treatments but the product of habits cultivated over many years.

Instead of seeking perfection, which is an impossible and ultimately tiresome goal by its very definition, it’s more realistic to focus on growth and self-respect.

The following is an analysis of several habits that shape a woman’s natural beauty as she matures, along with the reasons they benefit both her mind and her body.

The Art of Posture and Intentional Movement

Before a person says hello, their posture has already said a great deal. Body language is perhaps the most primitive means of communication, and it often reveals what a person truly feels. Standing straight, keeping the shoulders relaxed instead of hunched up by the ears, and moving with purpose all convey self-confidence.

Of course, as people age, some deterioration of posture occurs. This can be attributed to weakening muscles, decreasing bone density, and years of poor posture habits, which often develop from sitting too long at a desk or staring at smartphones. However, research in the field of “embodied cognition” suggests that posture affects not only how others perceive us but also our own inner state. When a person stands tall, they are not merely “pretending” to be confident; they are signaling to their brain that they are comfortable and in control of their surroundings.

Women who pay attention to maintaining good posture look more lively and youthful, since they do not appear to shrink into themselves. A smooth, stable walk, together with an upright posture, creates a sense of elegance that has nothing to do with which brand one wears or how professionally one’s makeup is applied.

Radical Consistency in Self-Care

Good skin is not about an elaborate, lengthy nighttime regimen of cutting-edge ingredients. Instead, dermatological research keeps returning to one simple yet critical truth: consistency wins over complexity. Women who radiate health well into later life are often those who have stopped chasing every new trend and settled on a reliable, basic routine.

Skincare for graceful aging can be simplified to three core steps: cleansing, moisturizing, and protection. The last of these, sun protection, is especially important for preventing premature skin aging. It is widely estimated that 80% to 90% of visible signs of skin aging, such as wrinkles, dryness, and uneven skin tone, are caused by sun exposure. Women who apply SPF every day for twenty years, for instance, look noticeably different from those who use it only at the beach.

The next pillar is moisturization. As you get older, your skin barrier weakens and becomes less effective at retaining lipids and moisture. Hydrating the skin supports this barrier, keeping the skin soft, glowing, and more resistant to damage from external factors. It’s not about how expensive the jar is; it’s about consistency. These women treat skincare as an investment, not as an emergency that requires miracle fixes.

Personal Style Over Fleeting Trends

There is a vast difference between being “fashionable” and “having style.” The former means wearing whatever the fashion industry dictates each season, while the latter means choosing clothes that express one’s identity. As their sense of beauty matures, many women experience a significant boost in confidence once they stop trying to meet fashion standards tailored to adolescents and begin building an individual aesthetic that reflects who they are now.

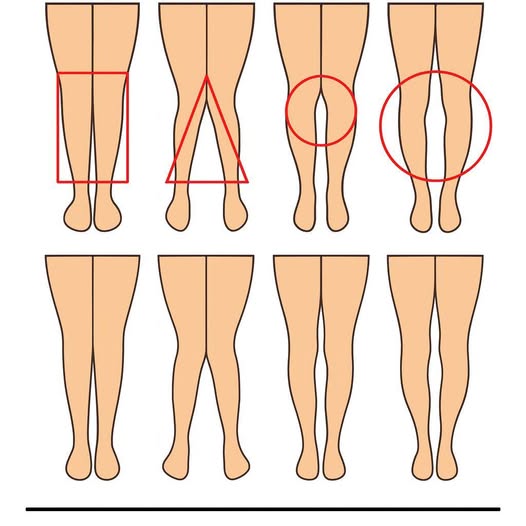

This is not about vanity but about a phenomenon known as “enclothed cognition.” The hypothesis holds that the clothes people wear can affect their psychology. When women dress in clothes that suit their body type, feel comfortable, and reflect their character, they tend to feel and act more confident.

As women age and develop a distinctive look, they usually choose clothing that complements their body and accentuates their facial features, rather than hiding their natural beauty in clothes that are too big or too small. Women with a distinctive look often become experts at color matching: they know which colors bring out the best in them and which are simply unflattering. They make these choices not to attract attention or to be “on trend,” but to stay true to themselves.

The Softening of Expressions

A smile is arguably one of the most universally appealing features a human being can have. It conveys instant warmth and makes any conversation more approachable. Beyond these social benefits, however, the way a person habitually uses their facial expressions also has physical effects.

The face acts as an imprint of a person’s most common emotional responses. Constant tension or frowning can leave the face with a permanently “hardened” look. By contrast, women who practice keeping their expressions relaxed, softening the jaw and brows, and maintaining a friendly disposition may actually experience aging differently.

There also seems to be an interesting “feedback loop” at play. Some research suggests that the act of smiling, whether deliberate or involuntary, may prompt the brain to release neurotransmitters such as dopamine and serotonin. By smiling often, these women help keep themselves happy and in good spirits, which makes them more open to interaction and more vibrant overall. Their appeal may not come from having fewer lines on their faces; rather, the lines they do have tend to form in “happy” places.

Cultivating a “Lively” Mind

As mentioned earlier, beauty cannot be understood only at the surface level; it also depends on the mind, the “pilot” of the body. A curious, active mind creates that special sparkle in the eyes and zest in speech. We have all known young people who seem old because they have stopped learning, and people over 80 who seem young because they remain interested in what is happening around them.

Research on cognitive health suggests that staying actively engaged in thinking and learning (by reading books, learning new languages, talking with other people, or simply solving puzzles) helps preserve brain flexibility and emotional stability. Mental activity also keeps one’s personality lively.

A positive attitude plays a big part here too. Although growing older inevitably brings loss and change, maintaining a positive outlook may help slow the aging process. Chronic stress has been linked to accelerated aging at the cellular level. When women focus on growth, exploration, and gratitude, they carry a certain lightness of spirit that makes them more engaging and appealing.

Movement as Self-Care, Not Punishment

Exercise is often advertised as a tool to “fix” the body, yet older women who approach aging with energy see exercise as a necessity. They don’t train to achieve an ideal physical appearance or to compensate for certain foods; they train because it makes them feel lively.

Research suggests that regular, moderate physical activity is more valuable than sporadic, intense workouts. Jogging, stretching, yoga, and similar exercises improve blood circulation, so the skin receives more of the oxygen and nutrients that keep it healthy. Exercise also benefits the joints and helps regulate hormone levels, which are vital to sustaining a good mood and restful sleep.

Of course, exercise helps maintain muscle mass. Since muscles naturally lose mass and size with age (a process called sarcopenia), preserving some muscle is important for both appearance and physical capability. A woman who views exercise as an act of self-respect is more likely to work out regularly and build a healthy lifestyle. The result is an older woman who looks active and energetic rather than one who is exhausting herself at the gym.

Conclusion

Looking beautiful at any age isn’t about trying to turn back the clock. It’s about alignment. It’s the sweet spot where how you feel on the inside, how you care for your body, and how you present yourself to the world all match.

What stands out most in women who age gracefully isn’t the absence of wrinkles or a specific dress size. It’s their presence. They seem comfortable in their own skin. They’ve built habits that support their well-being, and over time, those habits become visible in the way they stand, the way they listen, and the energy they bring into a room.

Confidence, consistency, and self-acceptance create a kind of beauty that doesn’t fade. It’s the only kind that actually improves with time. In the end, the most powerful transformation doesn’t come from a product; it comes from the quiet realization that taking care of yourself is one of the most meaningful things you can do.